Why agricultural robotics fails without functional safety engineering

Agricultural robotics does not usually fail because the algorithm is weak or because the prototype cannot move in a field. It fails because once the machine must operate near people, crops, implements, obstacles, network gaps and regulatory requirements, the project stops being only an autonomy problem and becomes a functional safety issue.

For founders, CTOs, production managers and machinery OEM teams, this changes the investment logic. If safety engineering is added after perception, navigation, and HMI have already been built, teams often face a redesign of the architecture, interfaces, validation plans, and even commercial timelines. Technical safety standards such as ISO 25119 and ISO 18497, together with Regulation (EU) 2023/1230, are not paperwork around the product; they shape the product itself.

Why do agricultural robots and their safety matter so much right now?

Agricultural robotics is gaining momentum because the sector is under pressure from labour shortages, sustainability requirements and the need for more precise field operations. The OECD notes that agriculture in the EU provides over 30 million jobs, yet faces an ageing and shrinking workforce. The EU CAP Network, in addition to labour shortages, identifies interoperability issues, regulatory compliance, and farmers’ readiness as major barriers to adopting robotics and artificial intelligence.

That context matters because it clarifies why so many robotics initiatives receive attention, funding and pilot interest. It also explains why failure is expensive. In agriculture, a robot is not a lab system operating in a controlled aisle. It lives on farms and moves through mud, dust, variable light, crop rows, headlands, slopes, people, vehicles and attachments. The environment is dynamic, and the acceptable margin for unsafe behaviour is low.

The main problem: robotics teams build autonomy before they apply safety requirements

Many teams start with a sensible technical question: can the machine detect, decide, and act? That is necessary, but it is not enough. In real deployments, the harder question is different: what must the machine do, and what must it never do, when sensors degrade, communication drops, localisation drifts, an operator approaches, or a safety function is triggered?

This is where projects begin to stall. A prototype can often demonstrate route following, crop detection, or targeted actuation. But once the programme moves towards validation, scaling or customer rollout, the team discovers that hazard analysis, safety-related control architecture, degraded modes, traceability and verification evidence were not built into the development process from the start. At that point, safety stops being a final review and becomes a redesign programme.

What usually goes wrong

Before a robotics team sees the problem clearly, it often appears as a delay, cost growth, or “unexpected certification work”. In reality, the pattern is usually one of the following:

- The autonomy stack was designed without clear safety boundaries between mission logic and safety logic.

- Sensing was optimised for task performance, not for safety function verification.

- HMI flows were designed for convenience, not for safe operator intervention.

- Failure handling was assumed, but not specified, tested, or evidenced.

- Software teams and compliance teams worked in sequence rather than together.

The commercial consequence is simple. A system that looks advanced in demo conditions may still be immature as a product.

What does functional safety mean in agricultural robotics?

Functional safety is often misunderstood as a narrow compliance discipline. In practice, it is an engineering method that ensures a control system correctly performs safety-related functions, even under fault conditions. In agricultural machinery, ISO 25119 sets the general principles for the design and development of safety-related parts of control systems, often referred to as SRP/CS.

That matters especially in robotics because agricultural machines are no longer simple mechanical assets with limited control logic. They are software-defined systems that combine perception, planning, actuation, connectivity, and operator interaction. ISO 18497 extends the discussion to machinery with partially automated, semi-autonomous and autonomous functions, and explicitly addresses significant hazards under intended use and reasonably foreseeable misuse.

A plain-language definition

At first use, the term can sound abstract, so it helps to state it simply. Functional safety asks: if something goes wrong, will the machine still move to, or remain in, a safe state in a predictable way? That includes stopping, limiting motion, disabling an implement, requesting human intervention, or preventing an unsafe start condition.


Why is it different from general software quality?

Good software quality reduces bugs. Following Functional Safety standards reduces hazardous behaviour. A stable application can still be unsafe if it makes a wrong but deterministic decision during degraded sensing, timing drift, or actuator fault. That is why safety engineering needs its own concept phase, architecture discipline, verification method and evidence trail.
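The difference can be illustrated with a minimal Python sketch. It is not taken from any standard or product: the `Detection` type, the function names and the 0.2 s freshness budget are assumptions made for illustration. Both functions are deterministic and free of bugs in the usual sense, but only the second one refuses to act on stale perception data.

```python
from dataclasses import dataclass

# Assumed freshness budget; a real value would come from the safety analysis.
MAX_AGE_S = 0.2


@dataclass(frozen=True)
class Detection:
    """Hypothetical perception output: was a person seen, and when."""
    person_present: bool
    timestamp_s: float


def may_move_naive(det: Detection) -> bool:
    """Deterministic and bug-free, yet unsafe: it trusts data of any age."""
    return not det.person_present


def may_move_checked(det: Detection, now_s: float,
                     max_age_s: float = MAX_AGE_S) -> bool:
    """Refuse motion when the detection is stale or timestamped in the future."""
    age_s = now_s - det.timestamp_s
    if age_s < 0 or age_s > max_age_s:
        return False
    return not det.person_present
```

With a one-second-old "no person" reading, the naive check still permits motion, while the checked version withholds it until fresh data arrives.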

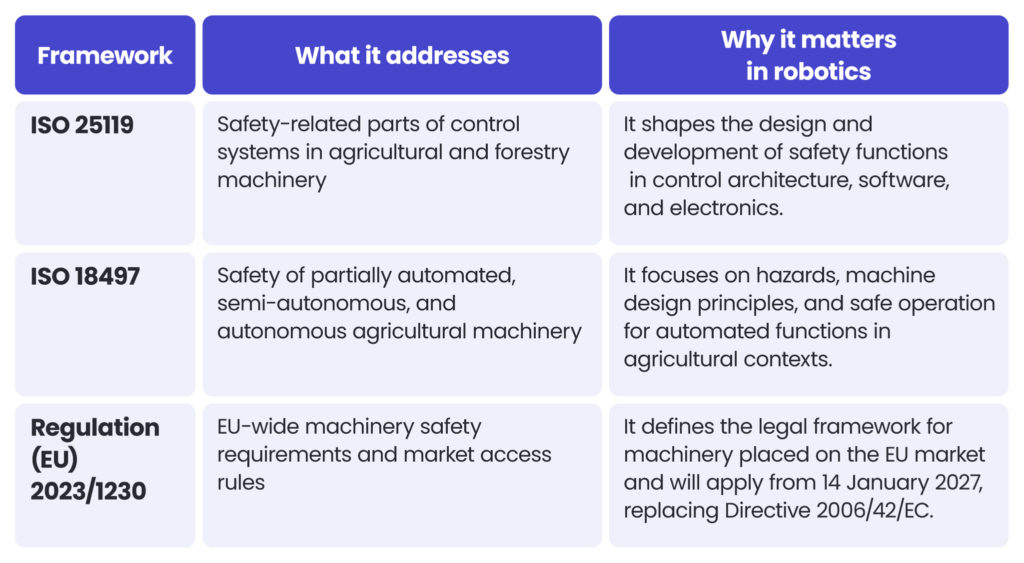

Safety-related technical standards: ISO 25119, ISO 18497 and the EU Machinery Regulation

The key safety references for agricultural robotics are two technical standards, ISO 25119 and ISO 18497, and one piece of legislation, the EU Machinery Regulation. These three references are often mentioned together, but they do not do the same job. Teams make better design choices when they understand the difference early.

What each framework covers

This distinction is important because robotics teams often treat regulation as a late-stage legal matter. That is incorrect. The legal framework and the technical safety standards influence software partitioning, interfaces, fault responses, documentation, and validation strategy from the concept phase onward.

A note on timing

The transition window is not theoretical. Regulation (EU) 2023/1230 entered into force after publication and will apply from 14 January 2027, with some provisions applying earlier. Teams building systems for commercial deployment, OEM integration, or EU scaling plans need to design solutions with that in mind now, rather than waiting until after the successful completion of the pilot phase.

The practical reading for product teams

For product teams, the implications are direct. If your roadmap includes autonomous navigation, remote supervision, automated implement control, human-machine interaction, or connected updates, then safety is no longer a document set around the machine. It is an integral part of the system design.

Why agricultural robotics projects stall in practice

The first reason is that agricultural environments are hard to model and even harder to bound. Weather changes visibility, crops change geometry, terrain changes traction, attachments change the system state, and human behaviour remains difficult to predict. Research reviews continue to identify safety, especially human detection and safe field operation, as a central challenge in agricultural robotics.

The second reason is that robotics in agriculture is a system-of-systems problem. A product may include navigation, machine control, perception, HMI, telemetry, geospatial data, cloud analytics, and service workflows. Safety has to be coherent across those layers. A safe braking function can be undermined by unclear state handling, poor operator messaging, or an unsafe restart path.

The third reason is organisational. Prototype teams are often structured around speed, rather than certification capabilities. That is rational at the start, but it becomes dangerous once the architecture starts to solidify. Every month spent building autonomy without safety assumptions increases the risk of having to redo the work later.

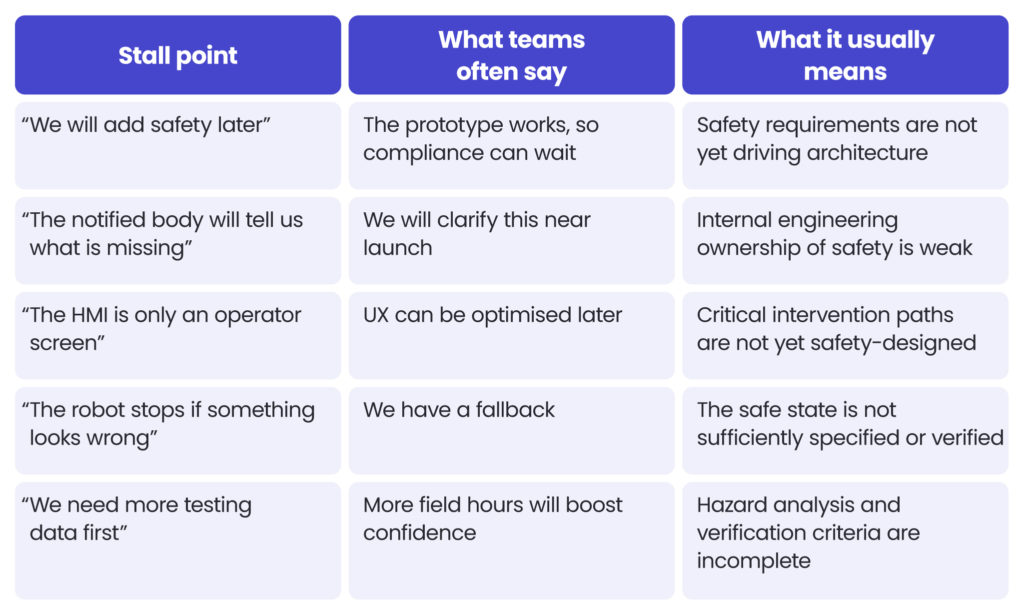

The most common stall points

A practical way to spot danger is to look for repeated friction in the same areas:

This pattern is common because teams conflate operational performance with acceptable risk. In safety engineering, those are related but not identical.

Functional safety as a business issue, not only an engineering issue

For production managers and OEM decision-makers, the issue is not only whether the robot can work. It is about whether the product can be industrialised, supported, insured, integrated, and sold at an acceptable level of risk. When safety is addressed late, product economics shift in several ways: timelines extend, test cycles multiply, hardware changes propagate back into software, and documentation effort becomes harder to reconstruct.

For investors, the signal is equally important. A robotics company that shows autonomy performance without a credible functional safety path may still be technically impressive, but it is not yet de-risked as a deployment business. In that sense, safety maturity is part of commercial maturity.

Where the business impact appears first

The first business symptoms are rarely labelled “functional safety”. They usually appear as:

- repeated pilot extensions instead of commercial conversion,

- customer hesitation around supervision and liability models,

- slower OEM discussions because architecture assumptions are unclear,

- difficulty moving from one machine configuration to a broader product family,

- rising cost of validation, testing, and documentation recovery.

What different stakeholder groups gain from solving this early

In agricultural robotics, each stakeholder group sees the same safety gap through a different lens.

For production managers

Production managers need a machine that can move from prototype to repeatable delivery. For them, early functional safety reduces late engineering churn, helps stabilise bill of materials decisions, and improves confidence that manufacturing, commissioning, and service documentation will not change every few weeks. The benefit is fewer surprises when the product approaches the scale-up stage.

For CTOs

CTOs need architecture that remains coherent as product ambition grows. For them, safety-led design creates clearer boundaries between autonomy logic, safety logic, connectivity, and operator interaction. The benefits include lower rework, better verification discipline, and a product stack that can evolve without undermining core safety assumptions.

For technical product owners

Technical product owners need a roadmap that aligns product goals with real delivery constraints. For them, functional safety turns vague risk into defined work packages: hazard analysis, safety requirements, traceability, verification planning, HMI behaviour, and evidence generation. The benefit is a backlog that supports deployment, not just demos.

For digital managers in agriculture

Digital managers often sit between operations, suppliers, and transformation programmes. Functional safety standards can help them recognise why robotics cannot be treated like a normal software rollout. The benefits are better vendor evaluation, more realistic milestones, and stronger questions during procurement or partnership discussions.

For agricultural machinery manufacturers

OEMs and machinery producers need systems that can fit their product portfolio, certification path, and after-sales model. For them, functional safety is the bridge between innovation and marketable machinery. The benefit is faster alignment between embedded software, machine control, HMI technology, compliance, and serviceability.

For founders and investors

Founders want speed, and investors want scale. Early safety engineering helps both, because it reduces the chance that a seemingly promising robotics platform will get trapped between pilot success and commercial readiness. The benefit is a clearer route from field trial to revenue-grade product.

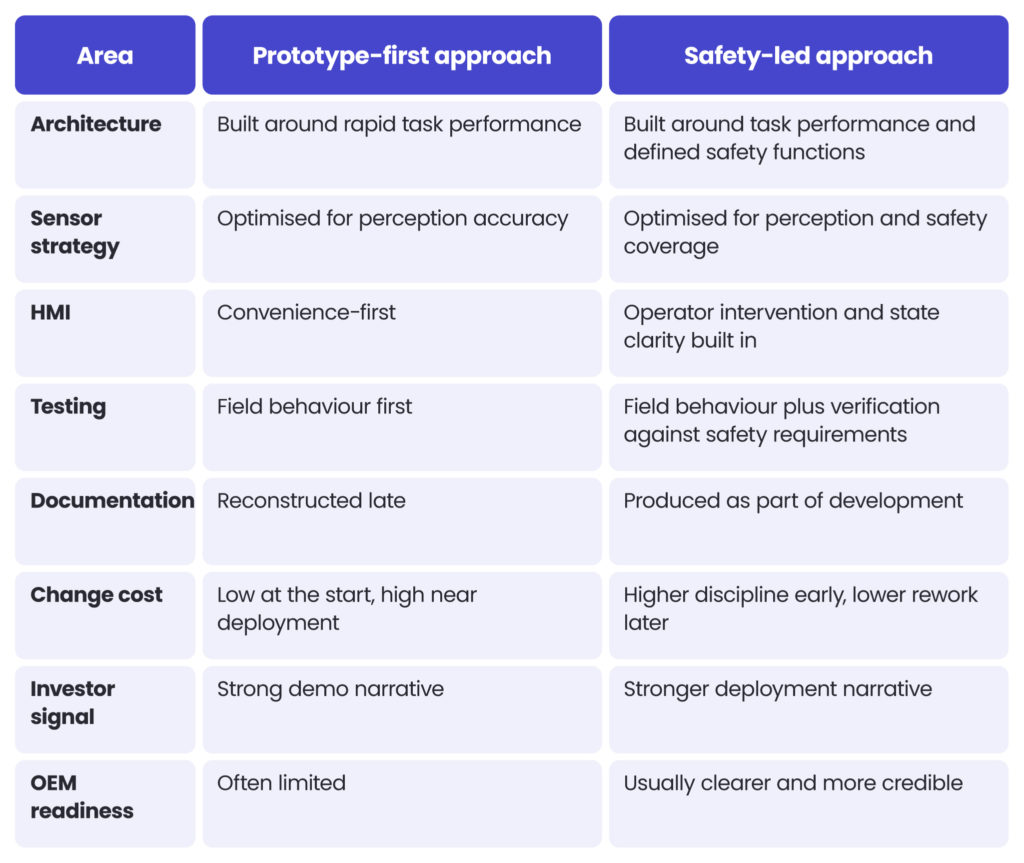

Comparison: prototype-first robotics vs safety-led robotics

A direct comparison makes the difference easier to see. Neither model guarantees success, but one of them allows for structural risk reduction.

That is the core trade-off. A prototype-first path looks faster in the first months. A safety-led path tends to be faster across the full route to a product that can actually be deployed, supported, and expanded.

Step-by-step: how to structure a safer robotics programme

A clearly defined process is helpful because many teams recognise the importance of safety but don’t know how to embed it. The goal of functional safety engineering is not to slow down innovation, but to prevent the rapid development of a flawed system.

- Define the operational envelope early

Start by describing where the machine is meant to operate, who may be nearby, what implements are involved, and what environmental variation is expected. This sounds obvious, but safety analysis weakens when “field operation” is treated as a single generic use case.
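One way to keep the envelope from staying generic is to write it down as data that can be checked. The Python sketch below is only an illustration: the field names and the choice of limits (slope, visibility, implements, bystanders) are assumptions, not values taken from ISO 18497 or any other standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperationalEnvelope:
    """Hypothetical envelope; every field and limit here is illustrative."""
    max_slope_deg: float
    min_visibility_m: float
    allowed_implements: frozenset
    bystanders_permitted: bool


@dataclass(frozen=True)
class FieldConditions:
    """Hypothetical snapshot of the conditions the machine is in."""
    slope_deg: float
    visibility_m: float
    implement: str
    bystander_detected: bool


def envelope_violations(env: OperationalEnvelope, cond: FieldConditions) -> list:
    """Return the reasons the conditions fall outside the analysed envelope."""
    reasons = []
    if cond.slope_deg > env.max_slope_deg:
        reasons.append("slope exceeds analysed limit")
    if cond.visibility_m < env.min_visibility_m:
        reasons.append("visibility below analysed limit")
    if cond.implement not in env.allowed_implements:
        reasons.append("implement not covered by hazard analysis")
    if cond.bystander_detected and not env.bystanders_permitted:
        reasons.append("bystander present in work zone")
    return reasons
```

An empty list means the current conditions sit inside what was analysed. Any other result names the assumption that no longer holds, which gives the hazard analysis something concrete to respond to.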

- Separate mission logic from safety logic

Next, make an explicit architectural distinction between what the robot is trying to achieve and what must constrain or stop it. A machine can continue to improve its autonomy stack, but safety decisions should not remain ambiguous within that stack.
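As a rough illustration of that separation, the Python sketch below keeps the safety decision in a small function that never looks at mission goals, and lets it constrain whatever the mission planner requests. All names, the three verdicts and the 0.5 m/s limit are assumptions for the example; a real allocation of safety functions would follow from the hazard analysis.

```python
from dataclasses import dataclass
from enum import Enum

# Assumed reduced-speed limit; illustrative only.
SAFE_SPEED_MPS = 0.5


class Verdict(Enum):
    ALLOW = "allow"
    LIMIT_SPEED = "limit_speed"
    STOP = "stop"


@dataclass(frozen=True)
class MotionCommand:
    """What the mission logic would like the machine to do."""
    speed_mps: float
    implement_on: bool


@dataclass(frozen=True)
class SafetyInputs:
    """Hypothetical inputs seen by the safety logic only."""
    person_in_zone: bool
    localisation_ok: bool
    estop_pressed: bool


def safety_verdict(inputs: SafetyInputs) -> Verdict:
    """Safety logic: decides from safety inputs alone, never from mission goals."""
    if inputs.estop_pressed or inputs.person_in_zone:
        return Verdict.STOP
    if not inputs.localisation_ok:
        return Verdict.LIMIT_SPEED
    return Verdict.ALLOW


def constrain(cmd: MotionCommand, verdict: Verdict) -> MotionCommand:
    """Apply the verdict to the mission command; the mission cannot override it."""
    if verdict is Verdict.STOP:
        return MotionCommand(speed_mps=0.0, implement_on=False)
    if verdict is Verdict.LIMIT_SPEED:
        return MotionCommand(min(cmd.speed_mps, SAFE_SPEED_MPS), cmd.implement_on)
    return cmd
```

The design choice worth noting is the direction of dependency: the autonomy stack can change freely, because the constraint path does not import anything from it.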

- Perform hazard analysis before architecture hardens

Hazard analysis should happen before interfaces become expensive to change. If the team waits until after navigation, HMI, and actuation are integrated, even simple safety findings can force a broad redesign.

- Define safe states and degraded modes

A robot should not merely “fail safely” as a slogan. It should have specified, testable behaviour for sensor loss, communication loss, localisation uncertainty, actuator anomalies, manual override, and restart conditions, in line with the applicable safety requirements.
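A specification of this kind can start as a simple table that is reviewed and tested like any other artefact. The Python sketch below is a hypothetical example: the fault names mirror the list above, but the responses assigned to them and the restart rule are assumptions, not recommendations.

```python
from enum import Enum


class Fault(Enum):
    SENSOR_LOSS = "sensor_loss"
    COMMS_LOSS = "comms_loss"
    LOCALISATION_UNCERTAIN = "localisation_uncertain"
    ACTUATOR_ANOMALY = "actuator_anomaly"
    MANUAL_OVERRIDE = "manual_override"


class Response(Enum):
    CONTROLLED_STOP = "controlled_stop"
    REDUCED_SPEED = "reduced_speed"
    DISABLE_IMPLEMENT = "disable_implement"
    HAND_OVER_TO_OPERATOR = "hand_over_to_operator"


# Illustrative assignments; real ones come out of the hazard analysis.
RESPONSES = {
    Fault.SENSOR_LOSS: Response.CONTROLLED_STOP,
    Fault.COMMS_LOSS: Response.CONTROLLED_STOP,
    Fault.LOCALISATION_UNCERTAIN: Response.REDUCED_SPEED,
    Fault.ACTUATOR_ANOMALY: Response.DISABLE_IMPLEMENT,
    Fault.MANUAL_OVERRIDE: Response.HAND_OVER_TO_OPERATOR,
}


def response_for(fault: Fault) -> Response:
    """Look up the specified response, falling back to a controlled stop."""
    return RESPONSES.get(fault, Response.CONTROLLED_STOP)


def unspecified_faults() -> list:
    """List faults that have no specified response yet (should be empty)."""
    return [f for f in Fault if f not in RESPONSES]


def restart_permitted(active_faults: set, operator_acknowledged: bool) -> bool:
    """Assumed restart rule: no active faults and an explicit operator acknowledgement."""
    return not active_faults and operator_acknowledged
```

Running `unspecified_faults()` in a test suite turns "we assumed that case was handled" into a failing check whenever a new fault is added without a response.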

- Build traceability from requirement to proof testing

Once safety requirements exist, each one needs traceability through design, implementation, and verification. This is where many software-heavy teams struggle, because the work feels bureaucratic until they need proof.
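Even a lightweight check can show where the chain from requirement to evidence is broken. The following Python sketch assumes a hypothetical record format (`req_id`, `design_refs`, `code_refs`, `test_refs`); real projects typically keep this in a requirements tool, but the gap logic is the same.

```python
from dataclasses import dataclass, field


@dataclass
class SafetyRequirement:
    """Hypothetical requirement record with links to downstream artefacts."""
    req_id: str
    text: str
    design_refs: list = field(default_factory=list)
    code_refs: list = field(default_factory=list)
    test_refs: list = field(default_factory=list)


def traceability_gaps(requirements: list) -> dict:
    """Map each requirement ID to the stages where it has no linked artefact."""
    gaps = {}
    for req in requirements:
        stages = (
            ("design", req.design_refs),
            ("implementation", req.code_refs),
            ("verification", req.test_refs),
        )
        missing = [name for name, refs in stages if not refs]
        if missing:
            gaps[req.req_id] = missing
    return gaps
```

For example, a requirement linked to a design document and a source file but to no test would be reported as `{"SR-002": ["verification"]}`, where `SR-002` is a made-up identifier.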

- Treat HMI as a safety surface

The operator view, alerts, override logic, and state messaging are not cosmetic layers. In automation systems, HMI affects whether a human understands the machine state quickly enough to intervene correctly. A proper approach to agritech HMI should reflect this integration logic clearly: interfaces must be connected to machine behaviour and relevant data, not treated as stand-alone screens.
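One way to tie the interface to machine behaviour is to derive operator messaging directly from the machine state and to check that no state is left without a message. The Python sketch below is a simplified illustration: the state names, the prompt wording and the fallback text are all assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class MachineState(Enum):
    OPERATING = "operating"
    DEGRADED = "degraded"
    STOPPED_SAFE = "stopped_safe"
    AWAITING_RESTART = "awaiting_restart"


@dataclass(frozen=True)
class OperatorPrompt:
    """What the operator sees and what they are expected to do."""
    headline: str
    required_action: str


# Illustrative wording; real texts would be validated with operators.
PROMPTS = {
    MachineState.OPERATING: OperatorPrompt("Working", "No action needed"),
    MachineState.DEGRADED: OperatorPrompt("Reduced mode", "Check sensor status"),
    MachineState.STOPPED_SAFE: OperatorPrompt("Stopped", "Inspect work zone"),
    MachineState.AWAITING_RESTART: OperatorPrompt("Ready to restart",
                                                  "Confirm zone is clear"),
}

# Shown if a state ever reaches the HMI without a defined prompt.
FALLBACK = OperatorPrompt("State unknown", "Keep clear and contact service")


def prompt_for(state: MachineState) -> OperatorPrompt:
    """Return the prompt for a state, never an empty screen."""
    return PROMPTS.get(state, FALLBACK)


def states_without_prompt() -> list:
    """List machine states that lack a defined operator prompt."""
    return [s for s in MachineState if s not in PROMPTS]
```

Keeping the mapping in one place means a new machine state cannot be introduced silently: the completeness check fails until someone decides what the operator should see.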

- Validate with deployment in mind

Finally, validate not only for technical correctness but also for the intended route to market. A pilot on a single farm is not the same as a product family deployed across machines, geographies, and operational variants.

Case study: from field prototype to certifiable product logic

A case study is useful here because the argument becomes clearer when tied to a real robotics trajectory. Spyrosoft has presented work with Small Robot Company, a British agritech business building robots intended to transform field operations, using technologies including Java, React and GeoServer.

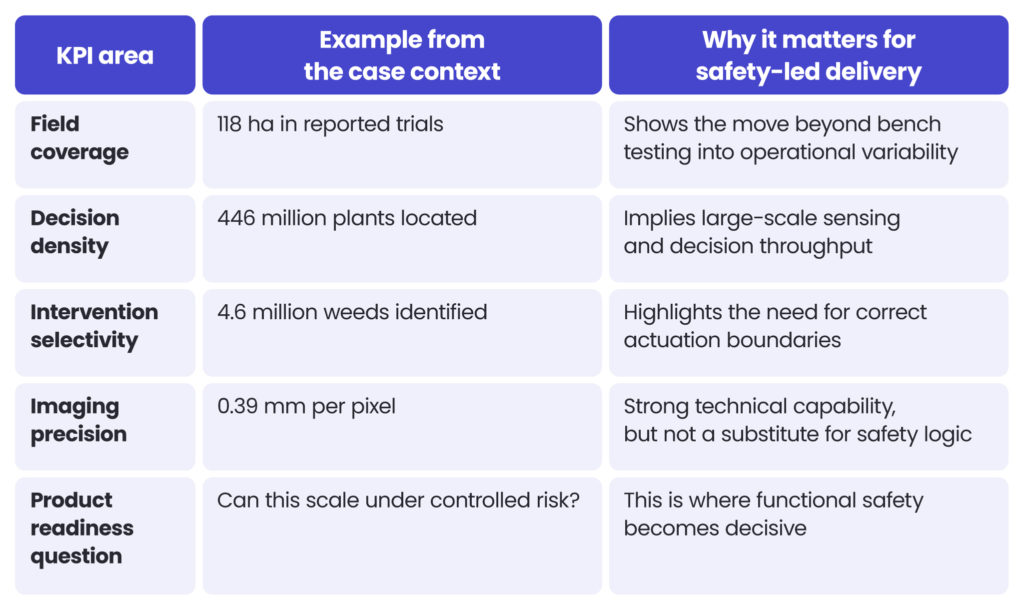

The public operating context around that programme helps explain the scale challenge. Small Robot Company reported on-farm trials across 118 hectares, locating 446 million wheat plants and identifying 4.6 million weeds, with imaging at 0.39 mm per pixel from a six-camera setup. That equates to roughly 3.78 million plants per hectare, 38,983 identified weeds per hectare, and a weed-to-plant ratio of about 1.03%.
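The derived per-hectare figures follow directly from the three reported totals. The short Python snippet below reproduces the arithmetic; the variable names are ours, and the inputs are the trial numbers quoted above.

```python
# Reported trial totals quoted in the text.
HECTARES = 118
WHEAT_PLANTS = 446_000_000
WEEDS = 4_600_000

# Derived densities and ratio.
plants_per_hectare = WHEAT_PLANTS / HECTARES
weeds_per_hectare = WEEDS / HECTARES
weed_to_plant_pct = WEEDS / WHEAT_PLANTS * 100

print(f"{plants_per_hectare / 1e6:.2f} million plants per hectare")
print(f"{weeds_per_hectare:,.0f} weeds per hectare")
print(f"{weed_to_plant_pct:.2f}% weed-to-plant ratio")
```

The results round to 3.78 million plants per hectare, 38,983 weeds per hectare and a ratio of 1.03%, matching the figures stated in the paragraph.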

Why these numbers matter

Those figures are not just agronomic or computer vision metrics. They show the operational density with which safety engineering must coexist. When a system is making decisions at the plant level across millions of field objects, safety cannot be bolted on as a final gate. It must shape how sensing confidence, actuation authority, operator supervision, and degraded mode logic are handled. A system operating at that level of granularity still needs predictable behaviour when localisation is uncertain, when a person enters the work zone, or when a subsystem becomes unreliable.

Practical KPI interpretation

A useful way to read the case is through deployment KPIs rather than pure AI metrics:

The lesson for OEMs and robotics founders

The lesson is not that advanced robotics is too risky. The lesson is that once a machine moves from promising capability to real deployment intent, the key maturity test becomes: can the system demonstrate safe behaviour by design, not only task intelligence by demonstration?

How Spyrosoft can support functional safety standards in this area

Drawing on our experience in both agritech and functional safety, we provide engineering and software services for robotics, automation, and control systems used in agricultural machinery, including autonomous tractors, robotic harvesters, and specialist agribots. The emphasis is on safe, reliable systems aligned with international safety standards and requirements.

Where Spyrosoft creates value

With our vast experience, we can handle projects where the challenge sits between software ambition and deployment reality. In the context of agricultural robotics, that means support in areas such as:

- embedded and control software for machine behaviour and safety-related functions,

- HMI design for clear operator state awareness and intervention,

- integration across autonomy, telematics, cloud, and machine subsystems,

- requirements engineering, traceability, and verification discipline,

- product delivery that fits certification-grade expectations rather than demo-only expectations.

Benefits for different audiences

For CTOs, this means architecture that is easier to scale and defend. For machinery manufacturers, it means stronger alignment between product software, machine logic and compliance expectations. For technical product owners, it means a clearer decomposition of safety-critical work into implementable backlog items. And for investors and founders, it means a more credible path from prototype traction to industrial readiness.

Practical points and tips for robotics teams on functional safety assessment

Safety topics become clearer when broken down into specific checkpoints. The questions and notes below can help your team prepare a risk assessment and determine whether safety is still only an intention or has already become an integral part of delivery.

Product readiness checklist

- Have we defined the operational envelope in sufficient detail to properly analyse hazards?

- Have we separated mission logic from safety logic in architecture?

- Have we identified safety-related functions, triggers, and safe states?

- Have we defined degraded modes for sensing, localisation, communications, and actuation?

- Have we designed operator intervention and restart flows explicitly?

- Do we have traceability from the safety requirement through implementation to testing?

- Is our HMI part of the safety concept, not only part of usability?

- Are we designing for the EU regulatory route that we actually intend to use?

- Can we explain our safety case clearly to an OEM, auditor, partner, or investor?

Warning signs that the programme is drifting

- The prototype works, but nobody can explain the safe state precisely.

- Testing is extensive, but the pass criteria are not tied to safety requirements.

- Autonomy and compliance are handled by separate teams with little shared ownership.

- An operator override exists, but the system state is difficult to interpret.

- Product milestones focus on features, not on verified deployment risks.

Summarising functional safety needs in agricultural robotics

Agricultural robotics fails without functional safety engineering because the machine doesn’t live in a closed technical problem. It lives in a real operational environment shaped by people, implements, variable terrain, uncertain sensing, service conditions, and legal obligations. That is precisely why companies should make a decisive shift from the question “Can the robot perform this task?” to the question “Can the robot perform this task safely, predictably, and repeatedly under real-world conditions?”

ISO 25119, ISO 18497 and the EU Machinery Regulation are key because they force that question into the design process. Teams that treat them as end-stage compliance tend to discover hidden architecture debts too late. Teams that build around them earlier are usually better positioned to industrialise, integrate, and scale.

For Spyrosoft, this is exactly where engineering value becomes visible. Our focus is not only on building agritech software technology that works as a demo, but also on supporting the creation of robotics software, interfaces, and integrations that are robust enough for real-world product use. In agricultural robotics, that is the difference between an impressive idea for automation and deployable machinery.

If you are evaluating an autonomous or semi-autonomous agricultural machine programme, review the safety architecture before you expand the feature roadmap or reach out to our experts for assistance in that area. And remember to do this sooner rather than later to avoid unexpected risks or costs, and ensure system safety.

Glossary

Functional safety – A discipline that ensures safety-related control functions behave correctly, including under fault conditions.

SRP/CS – Safety-related parts of control systems. In practice, these are the parts of a machine’s control system that perform safety functions.

Safe state – A defined machine condition that reduces risk, such as a controlled stop, motion limitation, or actuation disablement.

Degraded mode – A reduced operating mode used when part of the system becomes unavailable or unreliable, while maintaining acceptable risk.

Hazard analysis – A structured process for identifying dangerous situations, causes, and risk reduction needs.

HMI – Human-machine interface. In agricultural machinery, this includes displays, controls, alerts, and intervention paths used by operators.

Operational envelope – The conditions in which the robot is intended to operate, including terrain, crops, weather, supervision model, and nearby actors.

Sources

ISO, ISO 25119-1:2018 – Tractors and machinery for agriculture and forestry – Safety-related parts of control systems – Part 1: General principles for design and development. (ISO)

ISO, ISO 18497-1:2024 – Agricultural machinery and tractors – Safety of partially automated, semi-autonomous and autonomous machinery – Part 1: Machine design principles and vocabulary. (ISO)

EUR-Lex, Regulation (EU) 2023/1230 on machinery. (EUR-Lex)

FAQ

Functional Safety is crucial because agricultural robots operate in open, variable and sometimes hazardous environments. The combination of moving machinery, attachments, people, crops and changing field conditions creates risks that cannot be handled by autonomy performance alone.

ISO 25119 focuses on safety-related parts of control systems in agricultural machinery. ISO 18497 focuses on the safety of partially automated, semi-autonomous, and autonomous machinery, as well as the hazards associated with these modes of operation.

Regulation (EU) 2023/1230 applies from 14 January 2027, with some provisions applying earlier.

No, a strong AI model is not enough on its own. It may improve perception or decision support, but it does not replace safety architecture, defined safe states, traceability, verification, and regulatory alignment.

A common mistake is to treat safety as something that can be documented after autonomy is already designed. In practice, late safety work often exposes architectural issues that are expensive to fix.

Ask how safety-related functions are defined, how degraded modes are handled, how operator intervention works, what standards guide development, and how verification evidence is produced. Those questions are often more revealing than a polished demo.
