Alpamayo: NVIDIA’s reasoning AI for autonomous vehicles – what decision-makers need to know

NVIDIA’s Alpamayo is the first autonomous vehicle AI that explains every driving decision in plain text, in real time. This article covers the architecture, our test results, and what it means for your organisation.

The problem that kept autonomous driving stuck

For more than a decade, autonomous vehicle development has relied on imitation learning: train a model on millions of hours of footage, and it learns to drive like a human. It works well in common situations. It fails in rare ones – the long tail of unpredictable scenarios that do not appear often enough in training data to be learned reliably. No amount of additional data fully solves this, because a truly novel situation has no historical example to learn from.

The second problem is opacity. When a traditional AV system makes a wrong decision, you cannot ask it why. For regulators, fleet operators, and insurers, that is a direct barrier to certification and commercial deployment.

What Alpamayo is – three pillars, one architecture

Alpamayo is an open-source development portfolio built around three components that work together: the Alpamayo 1 AI model, the AlpaSim simulation framework, and the Physical AI open datasets.

Alpamayo 1: a model that explains its decisions

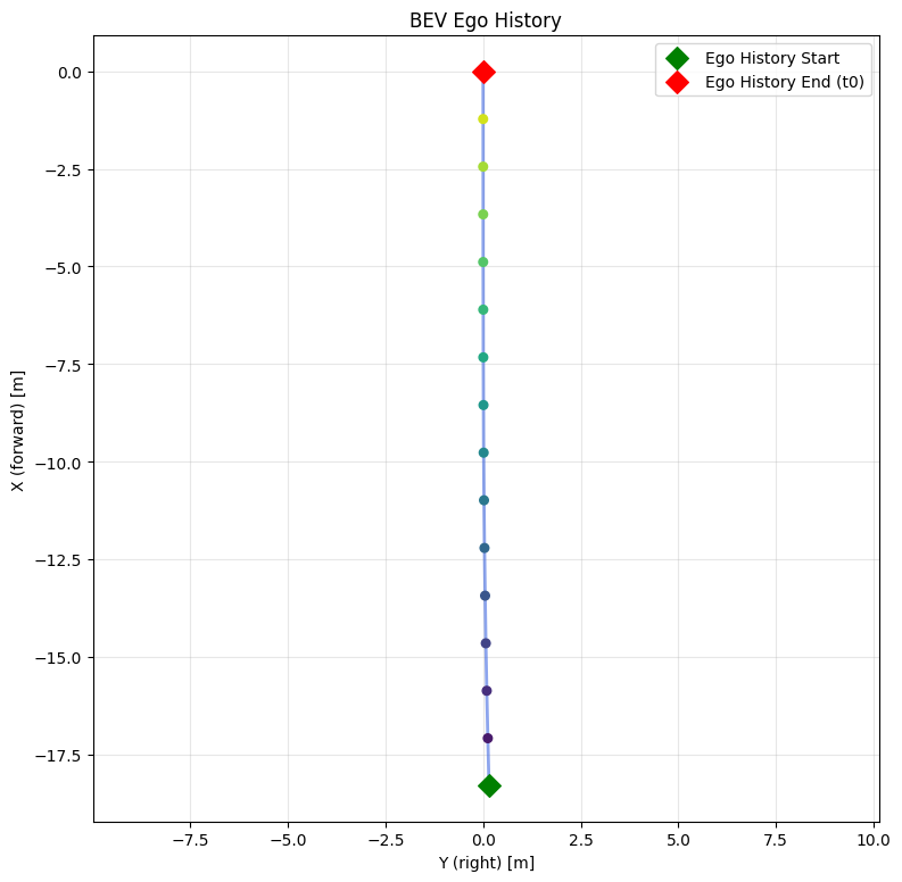

Alpamayo 1 is a Vision-Language-Action (VLA) model with 10 billion parameters. It takes multi-camera sensor input (front, left, right, and rear cameras) alongside the vehicle’s recent movement history (position and rotation sampled at 10Hz over the last 1.6 seconds), and produces both a text-based reasoning trace and a physical trajectory.

The reasoning trace is the key difference. Before generating any motion output, the model writes out why it is making its decision. This is the Chain-of-Causation (CoC). In real test scenarios, the model produces outputs such as: “Change to the left lane to stay in the through lane and avoid being forced into the right-turn slip lane at the upcoming intersection, while moderating speed to maintain gap to the lead vehicle and approach the marked crosswalk cautiously.” Or, at a traffic light: “Stop at the stop line – the straight traffic light is red.”

This is not a post-hoc summary. The reasoning is generated first and directly informs the trajectory output. Because the model works from understood concepts rather than memorised patterns, it can apply the same logic to situations it has never encountered before.

For vehicle trajectories, discrete token outputs create imprecision. Alpamayo 1 uses flow-matching diffusion models to generate trajectories as continuous values instead – producing smooth, physically realistic motion planning.
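To make the idea of generating a trajectory as continuous values more concrete, here is a minimal sketch of how a flow-matching sampler works in general: it starts from random noise and integrates a velocity field step by step until it reaches a path. The velocity field below is a hand-written stand-in that points at a known target path. In Alpamayo 1 that role would be played by a learned network, and the function names, step count, and waypoints here are assumptions for illustration only.

```python
import random

def toy_velocity(x, t, target):
    """Stand-in for a learned velocity network: the straight-line field
    that carries the point x at time t to `target` at t = 1."""
    return [(tx - xi) / (1.0 - t) for xi, tx in zip(x, target)]

def sample_trajectory(target, steps=10, seed=0):
    """Euler-integrate dx/dt = v(x, t) from Gaussian noise (t = 0) to t = 1."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in target]  # start from pure noise
    dt = 1.0 / steps
    for k in range(steps):
        t = k * dt  # largest t used is 1 - dt, so (1 - t) is never zero
        v = toy_velocity(x, t, target)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x

# Hypothetical target: longitudinal positions (metres) of five future waypoints.
target_path = [0.0, 1.5, 3.1, 4.8, 6.4]
sampled = sample_trajectory(target_path)
```

Because every waypoint is a floating-point number throughout, nothing is rounded to a fixed vocabulary of positions, which is the imprecision the discrete-token approach introduces.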

AlpaSim and the Physical AI dataset

AlpaSim is a closed-loop simulation environment built on neural reconstruction of real-world scenes. It allows development teams to test against realistic road conditions without requiring physical test miles for every scenario. The Physical AI open dataset provides the multi-sensor real-world data that grounds both training and simulation in physical reality. Together, they significantly reduce the time between initial development and fleet-ready validation.

Putting it into practice: what running Alpamayo looks like

Alpamayo 1 is not a research paper. The model weights are available on Hugging Face and can be deployed today. Our team downloaded the 10B model weights (originally released at NeurIPS in December 2025) and ran the full inference pipeline on our DGX Spark, feeding it real multi-sensor inputs from the Physical AI AV dataset.

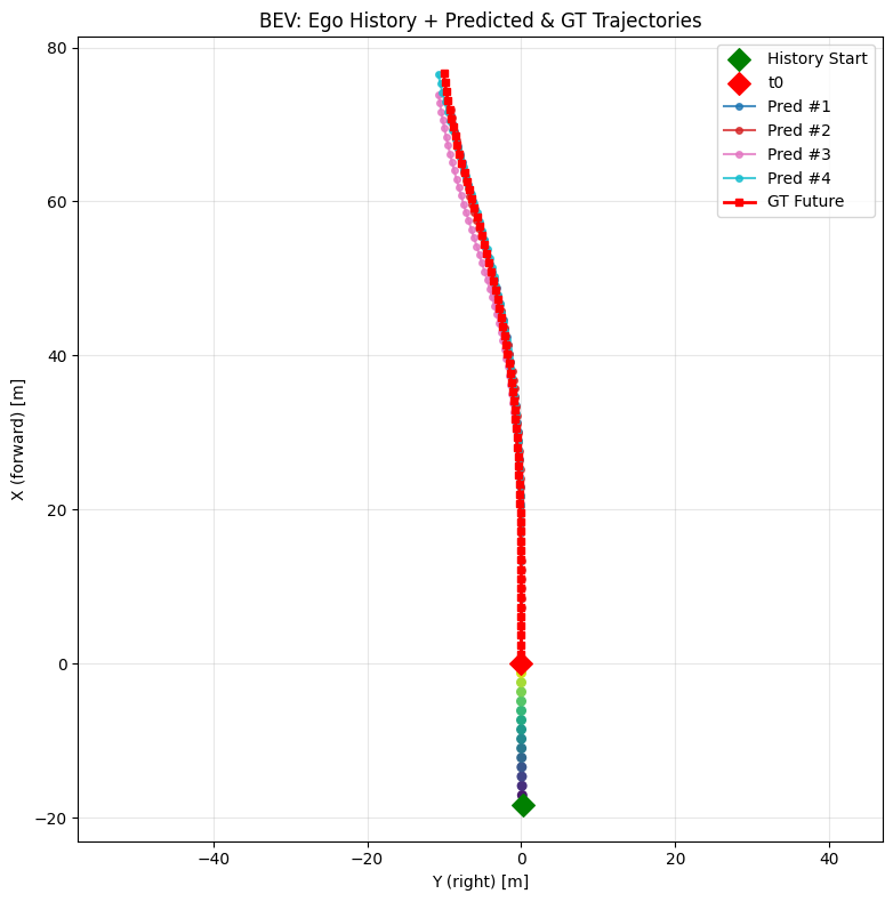

The setup involves passing a tokenised input dictionary – camera images from multiple viewpoints, attention masks, and ego-trajectory history sampled at 10Hz over the preceding 1.6 seconds – alongside a system prompt instructing the model to act as a safety-focused driving system. The model generates a Chain-of-Causation reasoning sequence first, then produces the corresponding continuous trajectory prediction.
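The shape of that input can be sketched as follows. This is not the Alpamayo API: the field names, the `EgoPose` layout, and the prompt wording are hypothetical, and the sketch stops before tokenisation and attention masks. It only encodes the figures given above: four camera views and ego history at 10Hz over 1.6 seconds, which works out to 16 samples.

```python
from dataclasses import dataclass

HISTORY_HZ = 10          # ego-history sampling rate stated in the article
HISTORY_SECONDS = 1.6    # ego-history window stated in the article
CAMERAS = ("front", "left", "right", "rear")

@dataclass
class EgoPose:
    t: float             # seconds relative to now (negative means past)
    position: tuple      # (x, y, z) in metres; hypothetical layout
    rotation: tuple      # (roll, pitch, yaw) in radians; hypothetical layout

def expected_history_len() -> int:
    """Number of ego-history samples implied by rate x window."""
    return round(HISTORY_HZ * HISTORY_SECONDS)

def build_inputs(frames: dict, history: list, system_prompt: str) -> dict:
    """Validate and bundle the raw inputs before any tokenisation step."""
    missing = [c for c in CAMERAS if c not in frames]
    if missing:
        raise ValueError(f"missing camera views: {missing}")
    if len(history) != expected_history_len():
        raise ValueError(
            f"expected {expected_history_len()} poses, got {len(history)}"
        )
    return {
        "images": {c: frames[c] for c in CAMERAS},
        "ego_history": history,
        "system_prompt": system_prompt,
    }

# Placeholder data: strings stand in for image tensors.
frames = {name: f"<{name} frame>" for name in CAMERAS}
history = [
    EgoPose(t=-(i + 1) / HISTORY_HZ, position=(0.0, 0.0, 0.0), rotation=(0.0, 0.0, 0.0))
    for i in range(expected_history_len())
]
inputs = build_inputs(frames, history, "Act as a safety-focused driving system.")
```

Validating the bundle up front is a design choice for the sketch: a missing camera or a short history window fails loudly instead of silently degrading the model's output.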

Scenario 1: stopping at a red light

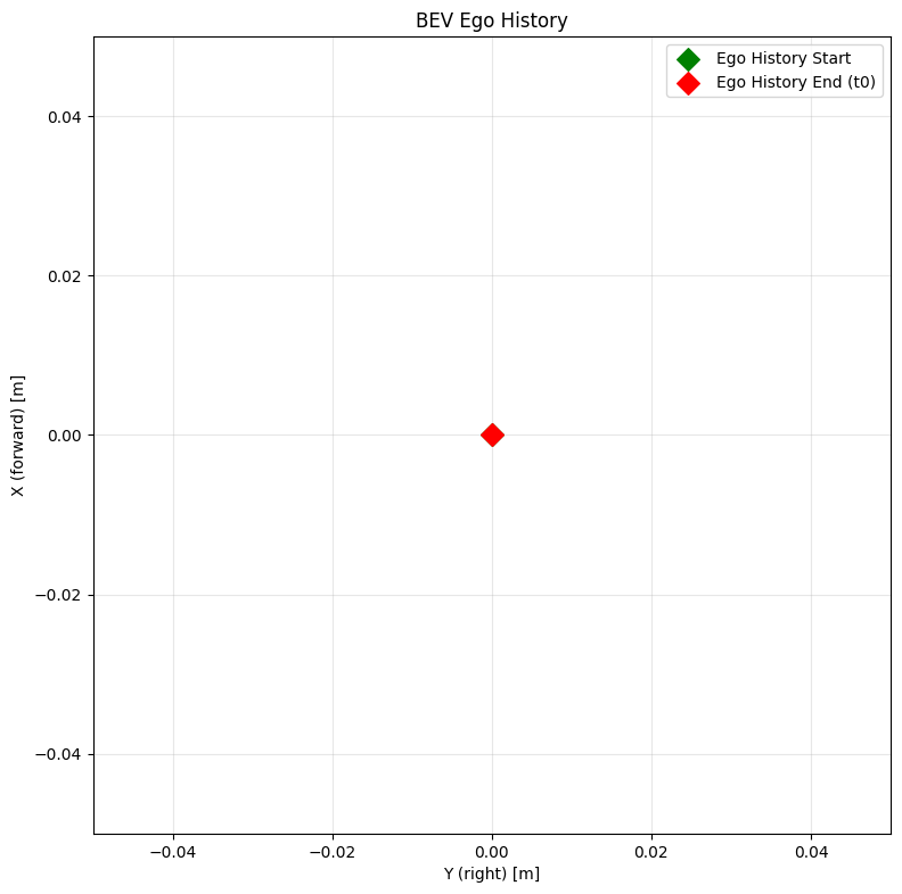

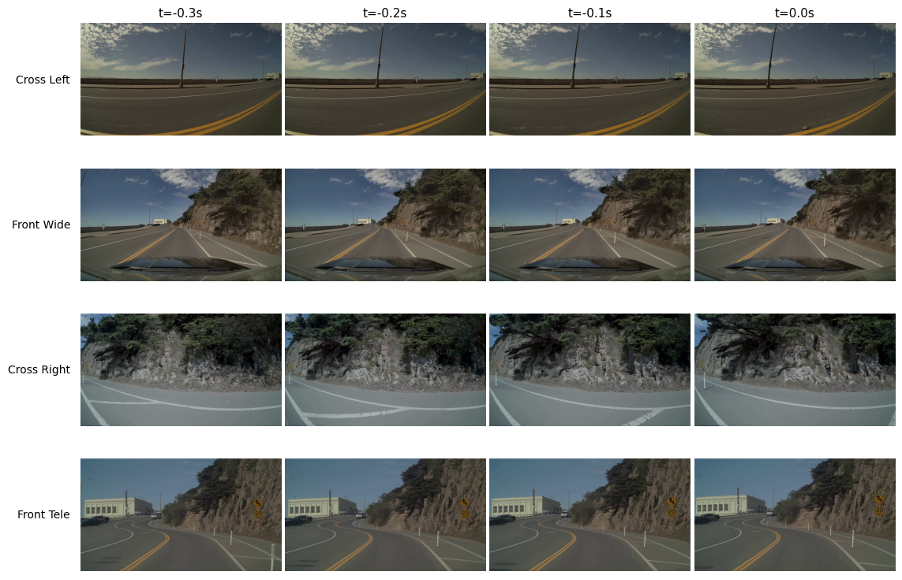

The vehicle is at an intersection. The front camera clearly shows a red traffic light. The model ingests four camera angles over the most recent 0.3 seconds and generates the following reasoning before outputting any trajectory.

Chain-of-Causation output – generated by Alpamayo 1 during our test:

- “Stop at the stop line since the straight traffic light is red.”

- “Accelerate to proceed through the intersection since the straight traffic light turns green.”

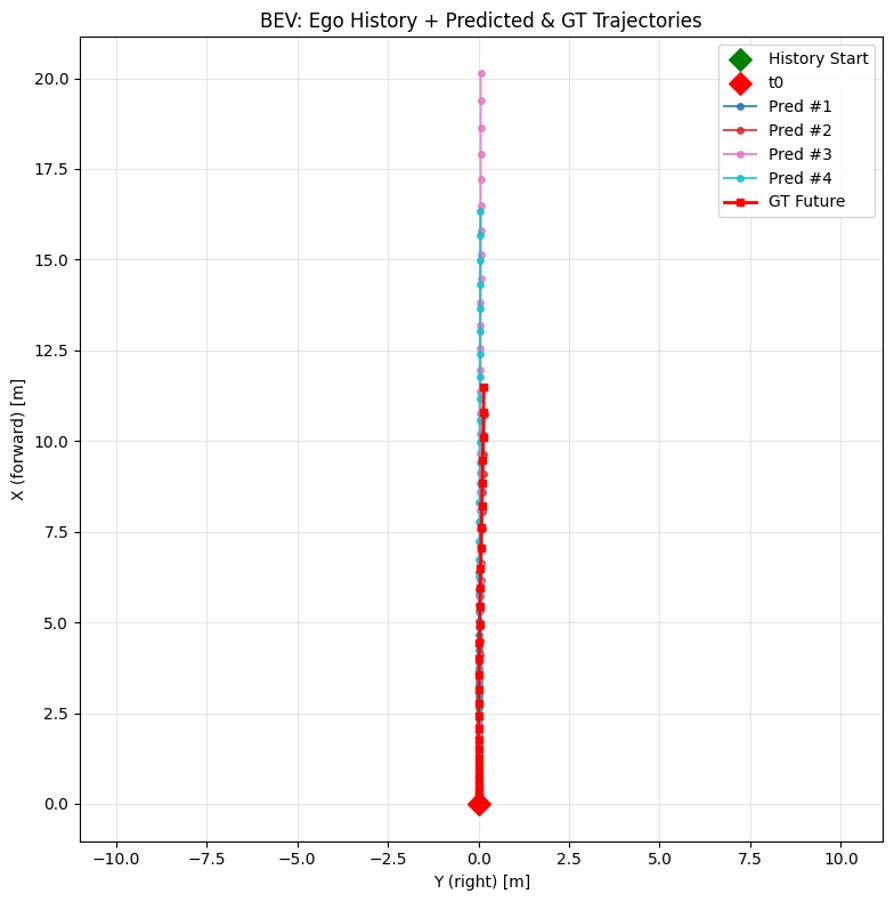

The camera grid below shows the multi-view input the model received at t=0.0s. The trajectory plot confirms the model’s predicted paths (Pred #1–4) align tightly with the ground truth future path (GT Future) – a straight stop at the line, with no lateral deviation.

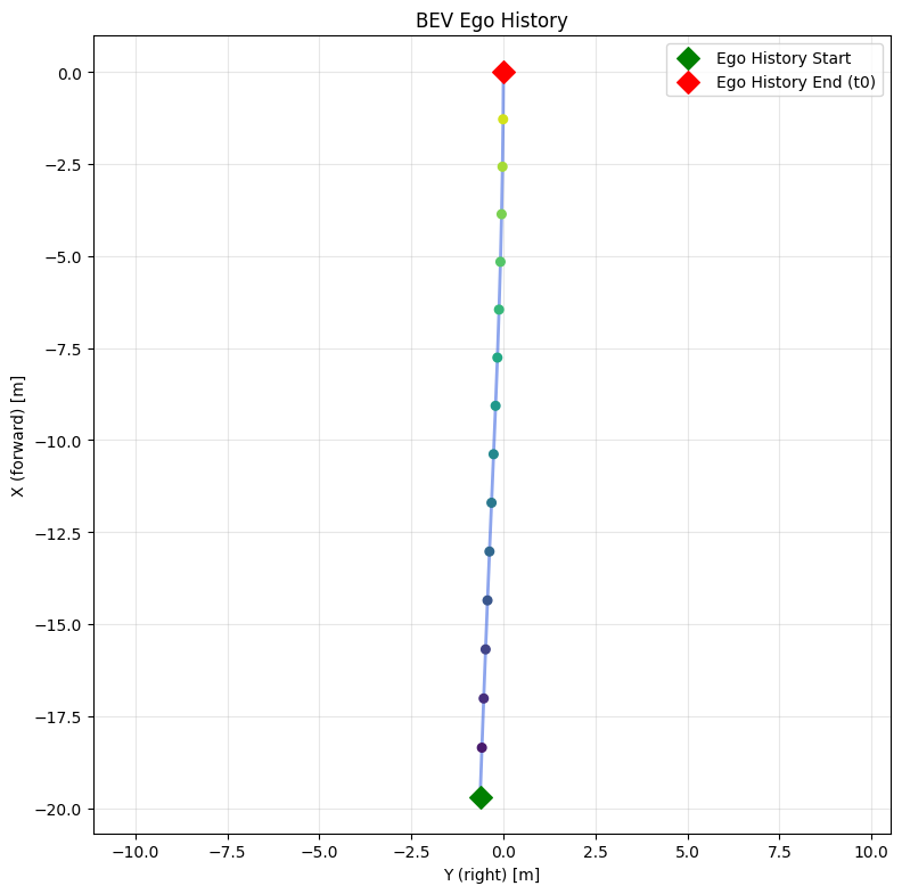
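The article does not state which metric sits behind "align tightly", so the following is an assumption: one common way to quantify the match between candidate paths and the ground-truth path is the average displacement error (ADE), with the best candidate reported as minADE. The waypoints below are invented for illustration and are not our test data.

```python
import math

def ade(pred, gt):
    """Average displacement error: mean Euclidean distance between
    paired (x, y) waypoints of a predicted and a ground-truth path."""
    if len(pred) != len(gt):
        raise ValueError("paths must have the same number of waypoints")
    return sum(math.dist(p, g) for p, g in zip(pred, gt)) / len(gt)

def min_ade(candidates, gt):
    """Smallest ADE across all candidate paths."""
    return min(ade(c, gt) for c in candidates)

# Invented example: a straight ground-truth path and two candidates (metres).
gt_future = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
candidates = [
    [(0.0, 0.0), (1.0, 0.1), (2.0, 0.2)],   # slight lateral drift
    [(0.0, 0.0), (1.0, 0.5), (2.0, 1.0)],   # larger lateral drift
]
best = min_ade(candidates, gt_future)
```

A lower value means the candidate stays closer to the path the human driver actually took. Note that this is an open-loop comparison and says nothing about how the vehicle would behave once its own actions change the scene.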

Scenario 2: night-time lane change at an intersection

The vehicle is approaching a night-time intersection where staying straight requires an active lane change to avoid a right-turn slip lane. Visibility is low. The model generates four candidate reasoning traces (one per predicted trajectory) all converging on the same safe decision.

Chain-of-Causation output – generated by Alpamayo 1 during our test:

- “Change to the left lane to stay in the through lane and avoid being forced into the right-turn slip lane at the upcoming intersection, while moderating speed to maintain gap to the lead vehicle and approach the marked crosswalk cautiously.”

- “Change lanes to the left to stay in the through lane and avoid being forced into the right-turn slip lane while keeping safe spacing from the lead vehicle and the pedestrian crossing area.”

- “Change to the left lane to pass the slower lead vehicle and maintain safer clearance from the right curb after the crosswalk.”

- “Change lanes left to pass a slower vehicle ahead in the right lane and maintain flow while approaching the pedestrian crossing area.”

This scenario is particularly relevant for testing generalisation: the model was not trained on this specific intersection geometry, yet all four predicted trajectories correctly execute the lane change and stay clear of the pedestrian crossing. The four candidates agree on the manoeuvre, although the stated cause differs: two cite the right-turn slip lane and two cite the slower lead vehicle.

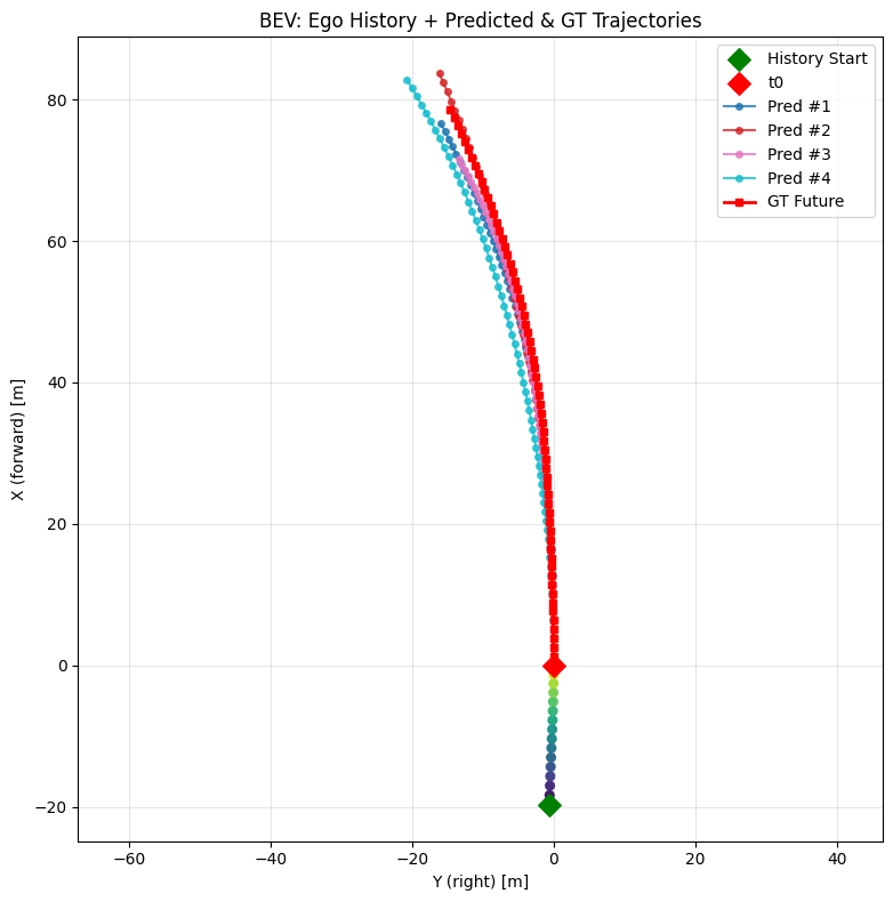

Scenario 3: highway curve speed adaptation

The vehicle is on a winding coastal highway. The road bends ahead. This scenario tests whether the model reasons about geometry, not just reacts to visible lane markings. All four generated reasoning traces say the same thing in slightly different words.

Chain-of-Causation output – generated by Alpamayo 1 during our test:

- “Adapt speed for the left curve ahead.”

- “Adapt speed for the curve because the road bends ahead.”

- “Adapt speed for the upcoming left curve.”

What matters here is the consistency: the independently sampled candidates all identify the same physical constraint, which suggests the reasoning is grounded in scene understanding rather than in surface pattern-matching.
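Consistency between traces can also be checked mechanically. The sketch below scores the traces quoted above by mean pairwise word overlap (Jaccard similarity). This is a crude lexical proxy chosen for simplicity, not a measure we used in testing and not part of Alpamayo; a semantic comparison would be more robust to paraphrase.

```python
import re
from itertools import combinations

def tokens(trace):
    """Lower-cased set of words in a reasoning trace."""
    return set(re.findall(r"[a-z]+", trace.lower()))

def jaccard(a, b):
    """Word-set overlap between two traces, from 0.0 to 1.0."""
    ta, tb = tokens(a), tokens(b)
    union = ta | tb
    return len(ta & tb) / len(union) if union else 1.0

def mean_pairwise_overlap(traces):
    """Average Jaccard similarity over every pair of traces."""
    pairs = list(combinations(traces, 2))
    if not pairs:
        raise ValueError("need at least two traces to compare")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)

curve_traces = [
    "Adapt speed for the left curve ahead.",
    "Adapt speed for the curve because the road bends ahead.",
    "Adapt speed for the upcoming left curve.",
]
score = mean_pairwise_overlap(curve_traces)
```

A score near 1.0 means the candidates use almost the same words; a low score across candidates for the same scene would be a prompt for a human reviewer to read the traces side by side.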

Insight from our DGX Spark tests: The model’s reasoning traces were immediately readable by non-ML engineers. This matters for safety review processes: you do not need to interpret neural network weights to understand what the system decided and why.

A direct answer to the regulatory question

The Chain-of-Causation output solves a problem that has blocked Level 4 certification in most markets: the black box.

The EU AI Act, emerging NHTSA guidance in the US, and equivalent frameworks across Asia all move toward the same requirement: safety-critical AI systems must be able to explain their decisions. Alpamayo produces that explanation natively, as part of every inference pass. It is built into the architecture.

The practical implications go further than compliance. Fleet operators can use reasoning traces to identify systematic gaps in model behaviour – not through expensive post-incident reconstruction, but by reading the model’s own output at scale. Insurance companies have concrete evidence to assess risk. Passengers in robotaxi deployments can receive plain-language explanations of what the vehicle is doing and why. That last point matters for public acceptance, which remains one of the underestimated barriers to commercial deployment.
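Reading the model's output at scale could start as simply as the sketch below, which buckets traces by their leading action word and counts them. The keyword map is hypothetical and deliberately tiny; a real fleet taxonomy would be richer, and the trace log format is an assumption.

```python
from collections import Counter

# Hypothetical keyword map from a trace's first word to a manoeuvre label.
MANOEUVRES = {
    "stop": "stop",
    "accelerate": "accelerate",
    "change": "lane_change",
    "adapt": "speed_adaptation",
}

def classify(trace):
    """Label a trace by its first word; unknown openings are flagged."""
    words = trace.strip().split()
    first = words[0].lower() if words else ""
    return MANOEUVRES.get(first, "unclassified")

def summarise(traces):
    """Count traces per manoeuvre label."""
    return Counter(classify(t) for t in traces)

log = [
    "Stop at the stop line since the straight traffic light is red.",
    "Adapt speed for the left curve ahead.",
    "Change lanes left to pass a slower vehicle ahead in the right lane.",
    "Yield to the cyclist merging from the right.",  # invented example
]
summary = summarise(log)
```

The `unclassified` bucket is the interesting one for a safety review: it collects decisions the taxonomy did not anticipate, which is where systematic gaps tend to surface first.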

Insight: Organisations that build on Alpamayo’s explainability architecture now will be ahead when mandatory explainability standards are enforced. Building explainability into a system after the fact is technically complex and expensive.

The Teacher-Student paradigm: how this scales to production

A 10-billion-parameter model runs on a data-centre GPU. It does not run in a vehicle. This is a known constraint, and Alpamayo is designed around it from the start.

Alpamayo 1 is the Teacher: a high-capability reasoning model that runs in cloud or on-premise infrastructure. It generates high-quality, labelled reasoning traces and trajectory outputs. Those outputs become the training data for Student models – smaller, optimised versions with 0.5 to 2 billion parameters that are designed to run on production automotive compute hardware, such as the DRIVE AGX Orin or the DRIVE Thor architecture.
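The size gap between Teacher and Student is easy to quantify with back-of-the-envelope arithmetic: weight memory is roughly parameter count times bytes per parameter. The sketch below covers weights only and ignores activations, caches, and runtime overhead, so real footprints will be higher. The precision options are generic assumptions, not a statement of how Alpamayo is shipped.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

def weight_gigabytes(params, dtype):
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params * BYTES_PER_PARAM[dtype] / 1e9

teacher_fp16 = weight_gigabytes(10_000_000_000, "fp16")     # 10B teacher
student_small_fp16 = weight_gigabytes(500_000_000, "fp16")  # 0.5B student
student_large_fp16 = weight_gigabytes(2_000_000_000, "fp16")  # 2B student
```

At 16-bit precision this gives about 20 GB of weights for the 10B Teacher against about 1 GB to 4 GB for the Student range, which is the difference between data-centre hardware and an automotive compute module.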

The economic logic is straightforward. You pay the computational cost of full reasoning capability once, at training time, using the Teacher. The Student inherits those capabilities through distillation and runs at a fraction of the cost at inference time – fast enough for real-time driving decisions. This is the bridge between a research model and an in-vehicle deployment.
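NVIDIA's distillation scripts are not yet released, so the following is only a generic sketch of what training a Student on Teacher outputs can look like: one term pulls the Student's waypoints toward the Teacher's trajectory, another rewards the Student for assigning high probability to the Teacher's reasoning tokens. The function names, the weighting `alpha`, and the example values are all assumptions.

```python
import math

def trajectory_loss(student_path, teacher_path):
    """Mean squared distance between student and teacher (x, y) waypoints."""
    if len(student_path) != len(teacher_path):
        raise ValueError("paths must have the same number of waypoints")
    squared = [
        (sx - tx) ** 2 + (sy - ty) ** 2
        for (sx, sy), (tx, ty) in zip(student_path, teacher_path)
    ]
    return sum(squared) / len(squared)

def reasoning_loss(student_probs, teacher_tokens):
    """Cross-entropy of the student's per-step token probabilities
    (dict: token -> probability) against the teacher's tokens."""
    eps = 1e-12  # avoids log(0) when the student gives a token no mass
    nll = [
        -math.log(max(step.get(tok, 0.0), eps))
        for step, tok in zip(student_probs, teacher_tokens)
    ]
    return sum(nll) / len(nll)

def distillation_loss(student_path, teacher_path,
                      student_probs, teacher_tokens, alpha=0.5):
    """Weighted sum of the trajectory and reasoning terms."""
    return (alpha * trajectory_loss(student_path, teacher_path)
            + (1 - alpha) * reasoning_loss(student_probs, teacher_tokens))

# Invented example values.
teacher_path = [(0.0, 0.0), (1.0, 0.0), (1.5, 0.0)]
student_path = [(0.0, 0.0), (0.9, 0.1), (1.6, 0.0)]
teacher_tokens = ["stop", "at", "line"]
student_probs = [
    {"stop": 0.9, "go": 0.1},
    {"at": 0.8, "to": 0.2},
    {"line": 0.7, "light": 0.3},
]
loss = distillation_loss(student_path, teacher_path, student_probs, teacher_tokens)
```

The loss falls to zero only when the Student reproduces both the Teacher's path and its reasoning tokens, which is what keeps the distilled model's explanations tied to its actions.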

NVIDIA plans to release official distillation scripts in June 2026. That release is the production gateway for the entire ecosystem. Our own roadmap is built around it: using the distillation tools to compress the 10B teacher to a 0.5B–2B student model, then transitioning off the DGX Spark and deploying onto edge devices to measure memory footprint, power consumption, and inference latency. The target is a stable real-time inference rate – the sub-second response time that reactive autonomous driving requires. The end goal is a live, closed-loop demonstration inside a physical vehicle on DRIVE AGX Orin or Thor hardware, validating that the distilled model can perceive, reason, and drive safely in real-time.

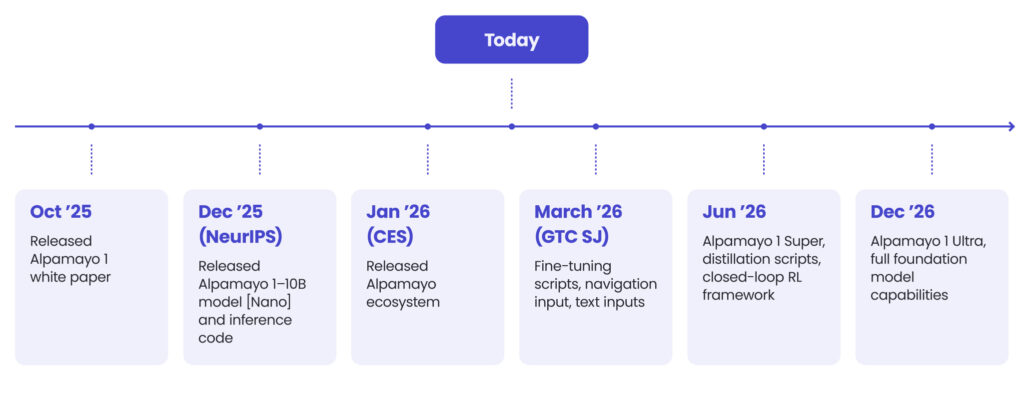
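Measuring inference latency on an edge device can be sketched with a small timing harness like the one below. The `infer` callable is a stand-in for whatever runs the distilled model, the dummy workload is invented, and the budget passed to `meets_budget` is a placeholder: "sub-second" is the only figure given above, and a real programme would set a much tighter, platform-specific number.

```python
import statistics
import time

def measure_latency_ms(infer, warmup=3, runs=20):
    """Time repeated calls to `infer` and report latency in milliseconds."""
    for _ in range(warmup):      # warm-up calls are not timed
        infer()
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        infer()
        samples.append((time.perf_counter() - start) * 1000.0)
    return {
        "median_ms": statistics.median(samples),
        "max_ms": max(samples),
        "runs": runs,
    }

def meets_budget(stats, budget_ms):
    """True if even the slowest observed call stayed within the budget."""
    return stats["max_ms"] <= budget_ms

def dummy_infer():
    """Placeholder workload standing in for a model forward pass."""
    return sum(i * i for i in range(10_000))

stats = measure_latency_ms(dummy_infer)
within_budget = meets_budget(stats, budget_ms=1000.0)
```

Checking the worst case instead of the average is deliberate: a driving system has to meet its deadline on every cycle, so a good median with occasional slow outliers would still fail the test.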

What NVIDIA’s 2026 roadmap signals to the industry

NVIDIA has published a clear, sequenced release plan for 2026. Each milestone addresses a specific gap in the path from model to production deployment.

| Milestone | Release | What ships |
| --- | --- | --- |
| March 2026 | GTC San José | Fine-tuning scripts + support for navigation and text prompt inputs |
| June 2026 | Alpamayo 1 Super | Larger model + official distillation scripts + open-source closed-loop RL framework |
| December 2026 | Alpamayo 1 Ultra | Full-scale universal AV foundation model |

The March 2026 release at GTC San José delivers fine-tuning tooling and expanded prompt support. This signals that the base model is stable enough for organisations to begin adapting it to their own data. The June release is more significant: distillation scripts and a closed-loop reinforcement learning framework address what NVIDIA calls “embodiment misalignment” – the gap between a model that generates correct reasoning and one that executes correct actions. That this gets a dedicated June release suggests it remains the hardest open problem. The December Alpamayo 1 Ultra release frames the end state: a universal foundation model for autonomous vehicles, analogous to what a large language model is for text.

For decision-makers, the roadmap creates a specific action window. The three months between March and June 2026 are the optimal period to build fine-tuning capability and data pipelines on the base model, before the distillation tools ship and the production race begins in earnest.

Three questions before your next AV investment

Alpamayo does not make autonomous vehicle development easy. It changes which problems are hard. Organisations that understand this distinction will allocate resources more effectively. Here is a three-question framework for evaluating your current position:

| Strategic question | Operational test | What it means |
| --- | --- | --- |
| Does your AV stack explain its decisions? | Can your team audit what the system did (and why) after the fact? | Chain-of-Causation logs will be required for regulatory approval in most markets. Build for this now. |
| Can your edge hardware run inference in real-time? | What is your latency budget and can you meet it without cloud compute in the loop? | Plan for distilled models (0.5B–2B parameters). Do not architect around the 10B teacher model. |
| Are you building from scratch or integrating? | Do you have enough data to fine-tune, or should you use the open ecosystem? | NVIDIA’s Physical AI datasets lower the barrier significantly. Use them before building proprietary pipelines. |

Four actions to take now:

1. Assess your explainability gap

Review your AV stack for its ability to produce readable decision logs. If it cannot, decide whether explainability can be added as a layer or requires architectural changes. That answer determines your integration path.

2. Start building on the open ecosystem before the June tooling release

The Physical AI datasets and Alpamayo 1 model weights are available today. Begin building data pipelines and evaluation workflows now – the advantage of an earlier start compounds quickly in machine learning.

3. Design for edge hardware from the start

The 10B parameter model is a training tool, not a deployment target. Define your target platform, memory constraints, and latency requirements before starting customisation work – not after.

4. Engage regulators proactively

Alpamayo’s reasoning traces give you a readable, auditable log of model decisions. Use this to start conversations with regulatory bodies now. Organisations that help shape explainability standards will be better positioned than those that wait to comply.

Conclusion

The move from pattern recognition to explicit reasoning addresses two structural problems at the architectural level – the long tail of edge cases and the black-box opacity that blocks certification.

NVIDIA’s team has demonstrated this in closed-loop simulation, and our own tests on real-world scenarios (stopping at red lights, navigating night-time intersections, handling highway curves) produced reasoning traces that are coherent, auditable, and directly tied to physical trajectory outputs. The open-source release means the ecosystem can move now, not after a proprietary licensing negotiation.

The window for strategic positioning is specific and finite. The fine-tuning release in March 2026, the distillation framework in June, and the Alpamayo 1 Ultra foundation model in December define a development arc that will look, in hindsight, like the period when the production landscape for autonomous vehicles was decided.

The reasoning layer has arrived. The question is: is your organisation ready to build on it?

That is where Spyrosoft comes in, helping automotive and mobility organisations integrate NVIDIA’s AI stack. Our engineers have hands-on experience with Alpamayo on DGX Spark hardware and can help you assess your readiness, define your strategy, and get to a working prototype faster. Fill out the form below to learn more.
